- #1

Norseman

Context:

I'm making a fairly simple 2D game with spaceships that build up velocity, with no artificial speed limits. This means they go very fast, so they tend to go off screen unless I zoom out a lot. When they go off screen, I want an arrow at the edge of the screen to point in their direction so that I still know how many ships there are and where to find them. The screen has four corners along its edge, and I need to know which edge to draw the arrow on. That's when the Fun began.

The problem:

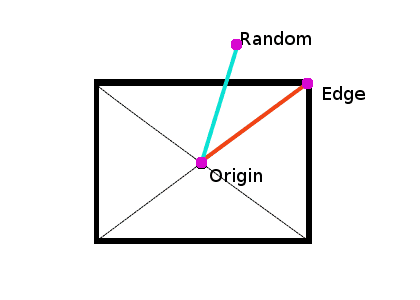

Let's say we've got an Origin point and two destination points: the Edge point and the Random point. Angle A is the angle from the Origin point to the Edge point, and angle B is the angle from the Origin point to the Random point. We want to know if angle A is greater than angle B.

In the example seen above, the angle from the Origin to the Edge point is probably a little under 90°, and the angle from the Origin to the Random point is probably around 100°. This is obviously quite easy to work out, since 100° > 90°. My problem starts when the Random point is at 359°. It's very close to 1°, so, intuitively, we'd say that it's less than 90°. But the computer disagrees: numerically, 359° > 90°.

To fix this, I'm going to have to redefine how we determine if one angle is larger than another. To compare angle A to angle B, I need to determine if angle B is closer in a clockwise direction or in a counter-clockwise direction. If it's closer in a clockwise direction, then it's larger. If it's closer in a counter-clockwise direction, then it's smaller.
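One way the "closer in which direction" idea might be sketched is with a signed shortest difference between the two angles. The function names `signed_angle_diff` and `is_greater` are made up for illustration, and the sketch assumes angles in degrees where a positive difference means "larger" (which visual direction that is, clockwise or counter-clockwise, depends on whether the y axis points down or up).

```python
def signed_angle_diff(a_deg: float, b_deg: float) -> float:
    """Shortest signed rotation from angle A to angle B, in degrees.

    The result lies in [-180, 180). Positive means B is reached by
    increasing the angle from A, negative by decreasing it.
    """
    # Python's % with a positive modulus never returns a negative value,
    # so shifting by 180 before and after wraps the difference correctly.
    return (b_deg - a_deg + 180.0) % 360.0 - 180.0


def is_greater(b_deg: float, a_deg: float) -> bool:
    """True if B counts as larger than A under the wrap-aware rule."""
    return signed_angle_diff(a_deg, b_deg) > 0.0


print(signed_angle_diff(90, 100))  # 10.0  -> 100° is larger than 90°
print(signed_angle_diff(90, 359))  # -91.0 -> 359° is smaller than 90°
print(signed_angle_diff(359, 1))   # 2.0   -> 1° is larger than 359°
```

Under this rule 359° is treated as 91° below 90°, which matches the intuition that it sits next to 1°.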

I'm not sure that I'm doing this right at all, and I also have another problem. Is 180° > 0°, or is it less than 0°? It's obviously not equal. Perhaps I need some third status?
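The "third status" idea could look something like the sketch below. It assumes an extra `OPPOSITE` result is acceptable for angles exactly 180° apart; `AngleOrder`, `compare_angles`, and the tolerance `eps` are hypothetical names chosen for this example, not part of any library.

```python
from enum import Enum


class AngleOrder(Enum):
    EQUAL = "equal"
    GREATER = "greater"
    LESS = "less"
    OPPOSITE = "opposite"  # exactly half a turn apart, neither side wins


def compare_angles(a_deg: float, b_deg: float, eps: float = 1e-9) -> AngleOrder:
    """Classify angle B relative to angle A on the circle (degrees)."""
    diff = (b_deg - a_deg) % 360.0  # always in [0, 360)
    if diff < eps or 360.0 - diff < eps:
        return AngleOrder.EQUAL
    if abs(diff - 180.0) < eps:
        return AngleOrder.OPPOSITE
    # Less than half a turn ahead counts as greater, otherwise less.
    return AngleOrder.GREATER if diff < 180.0 else AngleOrder.LESS


print(compare_angles(0, 180))   # AngleOrder.OPPOSITE
print(compare_angles(1, 359))   # AngleOrder.LESS
print(compare_angles(90, 100))  # AngleOrder.GREATER
```

Whether 180° apart deserves its own status or should simply be folded into one side is a design choice; an explicit value just makes the ambiguous case visible to the caller.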

Quick summary:

- Is 359° > 1°?

- Is 180° > 0°? If not, is 180° < 0°? If not, how should this be resolved?

- Is there an easy way to find out where a line intersects with a rectangle if you know the line originates from the center of the rectangle and if you know the angle of the line?
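For the last question, one possible approach is to turn the angle into a direction vector and scale it until it touches the nearest edge, which sidesteps the angle comparison entirely. This is only a sketch under some assumptions: `ray_rect_exit` is a made-up name, coordinates are relative to the rectangle's center, and the y axis is assumed to point down (as on most screens), so 90° points toward the bottom edge.

```python
import math


def ray_rect_exit(width: float, height: float, angle_deg: float):
    """Return (x, y, edge) where a ray from the center leaves the rectangle.

    x and y are offsets from the center. edge is one of
    "left", "right", "top", "bottom" (assuming y grows downward).
    """
    half_w, half_h = width / 2.0, height / 2.0
    dx = math.cos(math.radians(angle_deg))
    dy = math.sin(math.radians(angle_deg))

    # Distance along the ray to the vertical edges (tx) and the
    # horizontal edges (ty). A ray parallel to an edge never reaches it.
    tx = half_w / abs(dx) if dx != 0.0 else math.inf
    ty = half_h / abs(dy) if dy != 0.0 else math.inf

    # The ray exits through whichever edge it reaches first.
    if tx <= ty:
        edge = "right" if dx > 0.0 else "left"
        t = tx
    else:
        edge = "bottom" if dy > 0.0 else "top"
        t = ty
    return dx * t, dy * t, edge


x, y, edge = ray_rect_exit(800, 600, 0)
print(x, y, edge)  # 400.0 0.0 right
```

A ray that passes exactly through a corner is assigned to the left or right edge here; that tie-break is arbitrary and could go the other way.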